Your team just wrapped an impressive AI pilot. The demo wowed stakeholders. The proof of concept validated the technology. Everyone agreed it showed promise. Then nothing happened. Six months later, the project sits in limbo while your competitors ship real solutions. Sound familiar? You’re not alone. MIT research shows that 95% of enterprise generative AI pilots never make it to production, and the reasons have little to do with the technology itself.

Enterprise AI pilot project failures stem from organizational issues, not technical limitations. Most pilots fail because they lack business integration, clear ownership, proper data governance, and realistic success metrics. Companies that build internal capabilities, anchor projects to specific workflows, and establish feedback loops see dramatically higher production rates than those purchasing off-the-shelf solutions.

The Real Numbers Behind AI Pilot Failure

MIT researchers found that only 5% of generative AI pilots at major enterprises successfully transition to production. That’s not a typo. Nineteen out of twenty projects stall, get shelved, or quietly disappear from roadmaps.

The gap widens when you look at how companies approach implementation. Organizations building AI capabilities internally achieve production rates around 15 to 20%. Those buying vendor solutions? Less than 2% make it through. The difference isn’t about budget or technical sophistication. It’s about understanding what actually blocks progress.

TCS CEO Krithivasan recently confirmed these patterns across thousands of enterprise clients. The failure rate holds steady regardless of industry, geography, or company size. What changes is how leadership frames the initiative from day one.

Why Pilots Succeed But Projects Fail

Pilots are designed to prove feasibility. They run in controlled environments with clean data, dedicated resources, and forgiving timelines. Production demands something entirely different.

Here’s what breaks when pilots try to scale:

- Isolated success doesn’t transfer to messy workflows where data quality varies and edge cases multiply

- Stakeholder enthusiasm fades when implementation timelines stretch from months to years

- Budget approvals stall because ROI calculations assumed perfect conditions that don’t exist in practice

- Technical debt accumulates as teams bolt AI onto legacy systems never designed for machine learning workloads

- Governance frameworks lag behind deployment speed, creating compliance bottlenecks that halt progress

The pilot proved the technology works. What it didn’t prove was whether your organization could actually operate it at scale.

Five Structural Problems That Kill AI Projects

Problem One: No Financial Owner

Most AI pilots report to innovation teams or technology groups. These teams excel at experimentation but lack budget authority for operational systems. When the pilot needs production infrastructure, security reviews, and ongoing maintenance costs, nobody has signing power.

Successful projects assign a P&L owner before the pilot starts. That person has skin in the game. They need the AI to work because it affects their division’s performance metrics. They’ll fight for resources because the project impacts their bonus.

Problem Two: Data Nobody Can Trust

Your pilot used curated datasets. Production needs to consume data from seventeen different systems, some running on infrastructure from 2008. The data is incomplete, inconsistently labeled, and occasionally contradictory.

Companies routinely underestimate data preparation effort by 300 to 400%. Work that took two weeks in the pilot can take six months in production. By then, the original use case has changed and stakeholders have moved on.

| Data Challenge | Pilot Environment | Production Reality |
|---|---|---|
| Volume | 10,000 clean records | 47 million inconsistent entries |
| Update frequency | Static snapshot | Real-time streams from multiple sources |
| Quality control | Manual review | Automated with 15% error rates |
| Schema consistency | Single format | 23 different formats across divisions |

Problem Three: Technology Picked for Demos

Vendors optimize for impressive pilots. Their solutions work beautifully in controlled conditions. Then you try to integrate them with your SAP instance, your custom CRM, and that proprietary logistics system your company built in 2003.

The integration costs dwarf the license fees. The vendor’s professional services team quotes eighteen months. Your internal team has no capacity. The project enters what one CTO called “the valley of integration death.”

Problem Four: Success Metrics That Don’t Scale

Pilots measure technical performance. Did the model achieve 94% accuracy? Yes. Can it process 1,000 transactions per second? Absolutely. Will it reduce customer service costs by 30%? Nobody actually knows.

Production needs business metrics tied to real outcomes. Cost per transaction. Revenue per user. Time to resolution. Customer satisfaction scores. These metrics require instrumentation, baselines, and control groups that most pilots never establish.
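
To make the baseline-and-control-group idea concrete, here is a minimal Python sketch. It assumes you can tag each transaction with its cost and whether it went through the AI-assisted workflow. The `Transaction` type, its field names, and the sample figures are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Transaction:
    cost: float     # fully loaded cost of handling this transaction (assumed field)
    used_ai: bool   # True if it went through the AI-assisted workflow (assumed field)


def cost_per_transaction(transactions, used_ai):
    """Average cost for one arm of the comparison: AI-assisted or control."""
    costs = [t.cost for t in transactions if t.used_ai == used_ai]
    if not costs:
        raise ValueError("no transactions in this group")
    return mean(costs)


def relative_change(transactions):
    """Relative change in cost per transaction, AI arm versus control arm."""
    control = cost_per_transaction(transactions, used_ai=False)
    treated = cost_per_transaction(transactions, used_ai=True)
    return (treated - control) / control


# Made-up sample: two control transactions, two AI-assisted ones.
sample = [
    Transaction(cost=10.0, used_ai=False),
    Transaction(cost=10.0, used_ai=False),
    Transaction(cost=7.0, used_ai=True),
    Transaction(cost=7.0, used_ai=True),
]
print(f"{relative_change(sample):+.0%}")  # prints -30%
```

A real comparison would also need random assignment to the two groups and enough volume to rule out noise. The point is that the metric is defined and measurable before anyone claims a 30% saving.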

Problem Five: No Feedback Loops

Your pilot ran for three months with a fixed dataset. Production systems need continuous learning. User behavior changes. Market conditions shift. Regulations update. The model that worked in Q2 degrades by Q4 unless someone actively maintains it.

Companies that succeed build persistent learning systems from day one. They instrument everything. They establish review cycles. They assign teams to monitor model drift and retrain when necessary. This operational overhead surprises organizations that thought AI was a “set it and forget it” technology.
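
One common way to monitor drift is the population stability index (PSI), which compares the distribution of a feature or model score today against the distribution the model was built on. The sketch below is one possible implementation under simple assumptions: equal-width bins taken from the baseline sample, and a small floor to avoid taking the log of zero. The function name and defaults are illustrative, not a standard API.

```python
import math


def population_stability_index(baseline, current, bins=10):
    """PSI between two samples of a numeric feature or model score."""
    if not baseline or not current:
        raise ValueError("both samples must be non-empty")
    lo, hi = min(baseline), max(baseline)
    if lo == hi:
        raise ValueError("baseline has no spread to bin")
    width = (hi - lo) / bins

    def shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            idx = min(max(idx, 0), bins - 1)  # clamp values outside the baseline range
            counts[idx] += 1
        # A small floor keeps empty bins from producing log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    base_shares = shares(baseline)
    curr_shares = shares(current)
    return sum(
        (c - b) * math.log(c / b) for b, c in zip(base_shares, curr_shares)
    )
```

A frequently cited rule of thumb treats a PSI above roughly 0.25 as a shift worth investigating, but that threshold is an assumption to validate against your own data, not a guarantee. What matters is that someone owns the check and runs it on a schedule.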

The Build Versus Buy Trap

Here’s the uncomfortable truth about vendor solutions. They work for the vendor’s ideal customer. That customer has clean data, standard processes, and use cases that match the product roadmap. You probably aren’t that customer.

Companies building internal AI capabilities face a steeper learning curve. They make more mistakes early. But they develop organizational knowledge that transfers across projects. They build systems that fit their actual workflows instead of reshaping workflows to fit purchased software.

The numbers bear this out. With internal builds reaching production 15 to 20% of the time and vendor purchases less than 2%, internal builds ship at roughly 10 times the rate. The projects that do make it through deliver better business outcomes because they solve actual problems instead of theoretical ones.

This doesn’t mean never buy. It means understanding that purchasing AI tools without building internal capability is like buying a gym membership without learning to exercise. The equipment alone won’t make you fit.

How to Structure Projects That Actually Ship

Let’s get practical. Here’s what works based on organizations that consistently move AI from pilot to production.

1. Start with the business problem, not the technology

Identify a specific workflow that costs real money or loses real revenue. Quantify the current state. Define what success looks like in business terms. Only then evaluate whether AI helps.

A Singapore logistics company wanted to “use AI for optimization.” That’s not a project. They refined it to “reduce container repositioning costs by 15% within six months.” That’s actionable. They knew exactly what to measure and when to declare success or failure.

2. Assign a business owner with budget authority

This person should run a division that benefits from the AI. They need P&L responsibility. They should care more about business outcomes than technical elegance.

The technical team builds the system. The business owner defines requirements, secures resources, and removes organizational blockers. When budget questions arise, they have answers. When priorities conflict, they make calls.

3. Build minimum viable instrumentation first

Before you train a single model, set up the infrastructure to measure what matters. What’s the baseline performance? How will you track changes? What data do you need to collect?

One retail bank spent four months building their measurement framework before launching an AI pilot for loan approvals. The pilot itself took six weeks. They reached production in three months because they knew exactly whether the system worked and could prove it to regulators.

4. Plan for data reality from day one

Assume your production data is messier than you think. Budget 3x what you estimated for data preparation. Identify data quality issues during the pilot and fix the upstream systems that create them.

A manufacturing firm discovered their sensor data had 18% missing values. Instead of working around it in the pilot, they fixed the sensor network. The AI project took longer to launch but worked reliably in production because it had trustworthy inputs.
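
A missing-value profile like the one that surfaced the 18% gap can be as simple as the sketch below. It assumes records arrive as dictionaries and treats a field as missing when it is absent or `None`. The field names and the 5% threshold are hypothetical.

```python
def missing_rates(records, fields):
    """Share of records in which each field is absent or None."""
    if not records:
        raise ValueError("no records to profile")
    return {
        field: sum(1 for r in records if r.get(field) is None) / len(records)
        for field in fields
    }


def fields_over_threshold(records, fields, max_missing=0.05):
    """Fields whose missing rate exceeds the tolerated share."""
    rates = missing_rates(records, fields)
    return {field: rate for field, rate in rates.items() if rate > max_missing}


# Made-up sensor readings: "temp" is missing in two of four records.
readings = [
    {"temp": 21.5, "pressure": 1.01},
    {"temp": None, "pressure": 1.02},
    {"pressure": 1.00},
    {"temp": 22.1, "pressure": 1.03},
]
print(fields_over_threshold(readings, ["temp", "pressure"]))  # prints {'temp': 0.5}
```

Running a check like this against real production extracts during the pilot tells you which upstream systems need fixing before the model depends on them.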

5. Treat the pilot as training for your team

The pilot’s real value isn’t proving the technology works. It’s teaching your organization how to operate AI systems. Document everything. Build runbooks. Train operators. Establish escalation procedures.

Companies that view pilots as learning exercises build organizational muscle. Those that view pilots as vendor evaluations stay dependent on external expertise and struggle when real problems emerge.

“The difference between companies that ship AI and those that don’t comes down to organizational readiness, not technical capability. You can buy the best models in the world, but if your company can’t operate them, they’ll never leave the pilot phase.” — Enterprise AI deployment consultant

Why Governance Kills Projects (And How to Fix It)

Nobody starts an AI project planning to get stuck in compliance review. But regulatory requirements, security concerns, and risk management processes create bottlenecks that pilots never encounter.

Your pilot ran on test data that contained no personally identifiable information. Production needs access to real customer records. That triggers privacy reviews, security assessments, and legal approvals. Each gate takes weeks or months.

Smart organizations run governance in parallel with development, not sequentially. They involve compliance teams during pilot design. They document security controls as they build them. They create approval workflows that assume AI systems will need regular updates, not one-time sign-offs.

A financial services company reduced their governance timeline from nine months to six weeks by embedding their chief privacy officer in the AI project team. She shaped the system design to meet regulatory requirements instead of reviewing it after the fact.

The Regional Patterns Nobody Talks About

Singapore and Nordic countries see higher AI production rates than other regions. The difference isn’t technical sophistication or bigger budgets. It’s organizational culture around experimentation and acceptable failure.

Organizations in these regions treat pilots as genuine experiments. They expect some to fail. They reward teams for learning and sharing insights, not just for shipping products. This psychological safety lets teams kill bad projects early instead of dragging them toward production to avoid admitting failure.

Contrast this with cultures where failed pilots damage careers. Teams in these environments optimize for impressive demos and positive reports, not honest assessments. They keep zombie projects alive long past their useful life. Resources get trapped in initiatives everyone knows won’t ship but nobody can officially cancel.

The fix isn’t cultural transformation. It’s explicit project review criteria established before pilots start. Define what success looks like. Define what failure looks like. Commit to killing projects that hit failure criteria regardless of sunk costs. This clarity lets teams move fast and redirect resources to better opportunities.
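
Writing the criteria down as data, before the pilot starts, makes them harder to renegotiate later. The sketch below shows one way to do that. The `Criterion` and `Verdict` names, the rule that any single failure kills the project, and the example thresholds are all assumptions for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Verdict(Enum):
    CONTINUE = "continue"
    SHIP = "ship"
    KILL = "kill"


@dataclass(frozen=True)
class Criterion:
    metric: str                    # name of a business metric
    success_at: float              # value at which this metric counts as a success
    failure_at: float              # value at which this metric triggers a kill
    higher_is_better: bool = True


def review(criteria, observed):
    """Kill if any metric hits its failure line; ship only if all hit success."""
    if not criteria:
        raise ValueError("define at least one criterion before the pilot starts")
    successes = []
    for c in criteria:
        value = observed[c.metric]
        if c.higher_is_better:
            failed, succeeded = value <= c.failure_at, value >= c.success_at
        else:
            failed, succeeded = value >= c.failure_at, value <= c.success_at
        if failed:
            return Verdict.KILL  # sunk costs are deliberately not an input
        successes.append(succeeded)
    return Verdict.SHIP if all(successes) else Verdict.CONTINUE


# Hypothetical thresholds for a cost-reduction pilot.
criteria = [Criterion("cost_reduction_pct", success_at=15.0, failure_at=2.0)]
print(review(criteria, {"cost_reduction_pct": 8.0}))  # prints Verdict.CONTINUE
```

Note that the function has no parameter for money already spent. That omission is the design choice: the review can only look at whether the agreed metrics moved.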

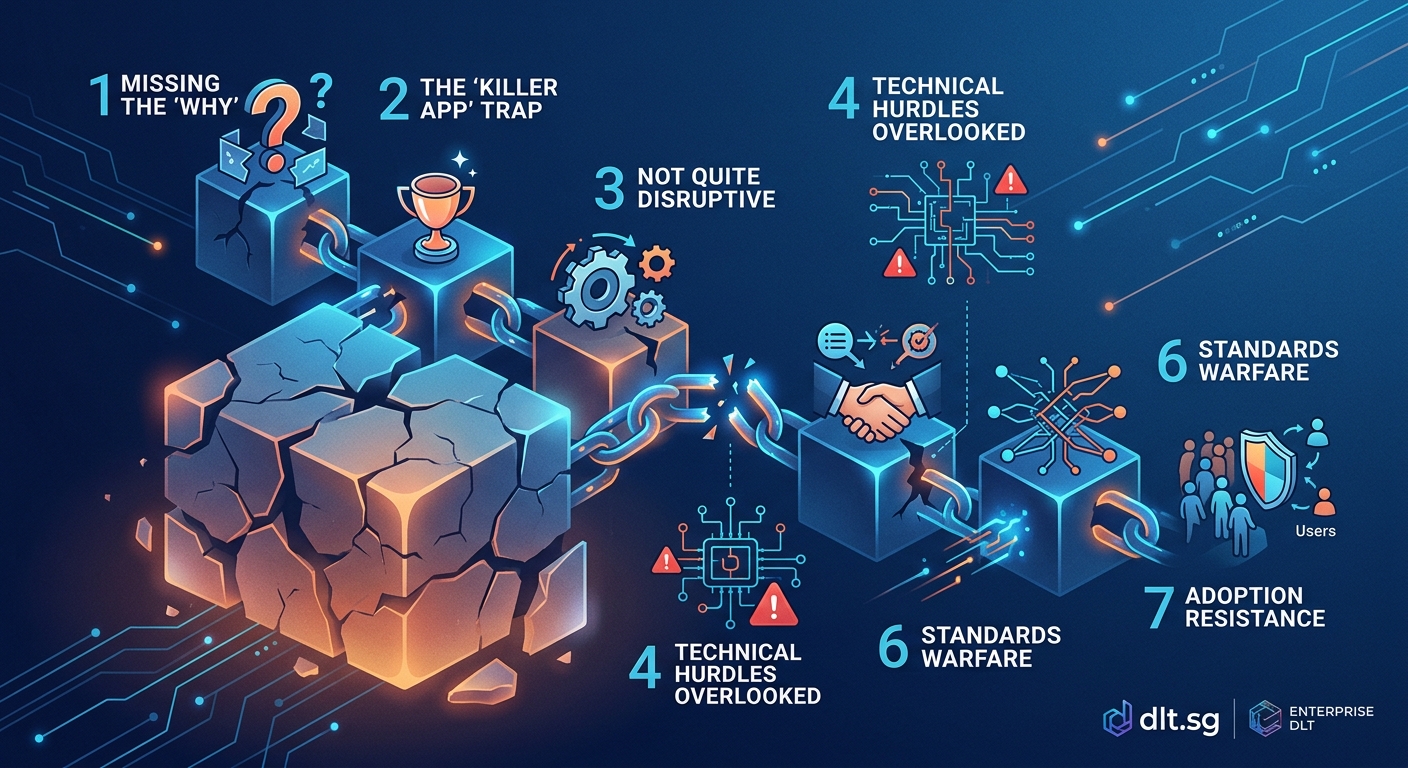

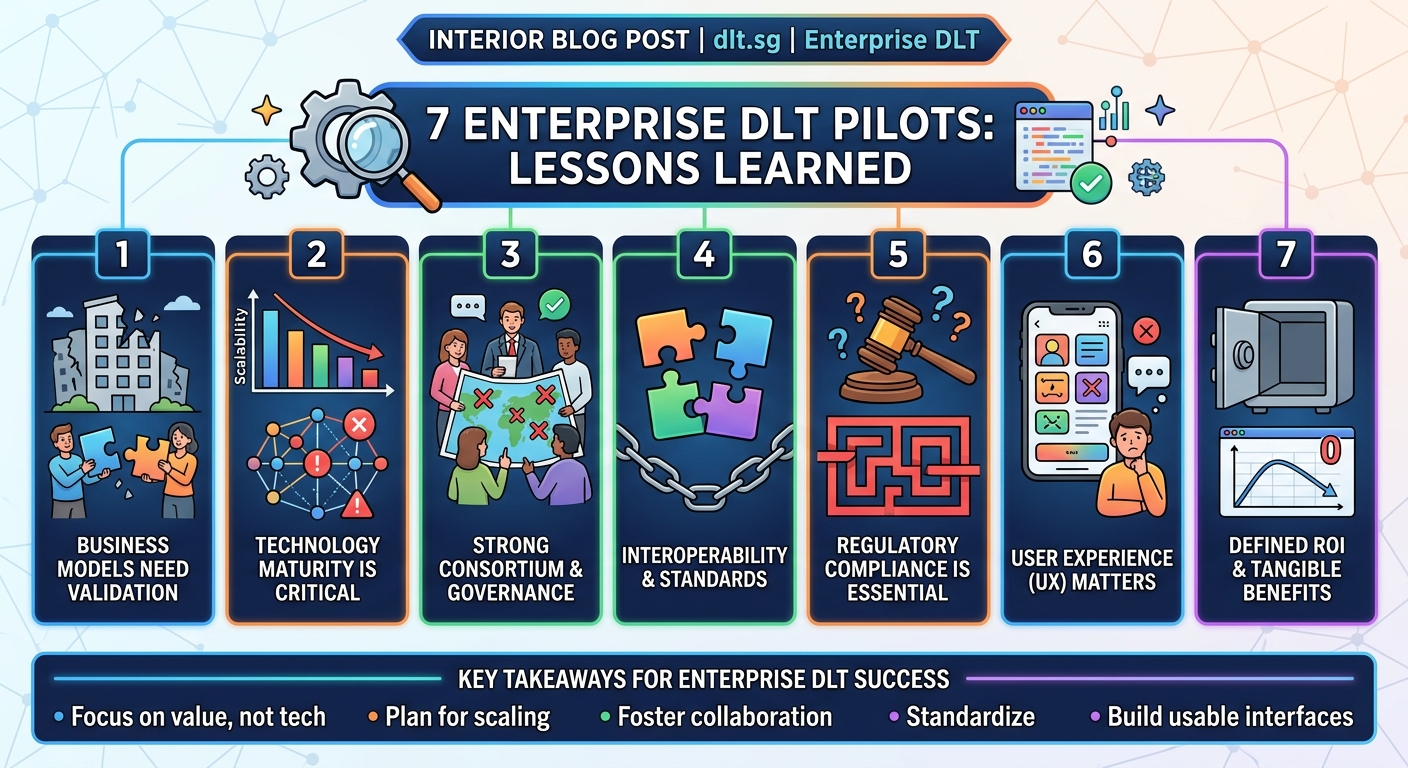

What Distributed Ledger Projects Teach Us About AI Pilots

The patterns behind enterprise AI pilot project failures mirror what happened with blockchain initiatives five years ago. Companies ran impressive proofs of concept that never reached production for identical reasons.

Distributed ledgers promised to transform supply chains, financial settlement, and identity management. Pilots showed technical feasibility. Then projects stalled because organizations hadn’t solved for data governance, established clear ownership, or integrated with existing systems.

The successful blockchain deployments shared common traits with successful AI projects. They started with specific business problems. They had executive sponsors with budget authority. They built internal expertise instead of relying entirely on vendors. They planned for production constraints during pilot design.

Understanding which architecture fits your business needs matters as much for AI as it did for distributed ledger technology. The wrong architecture choice during pilots creates technical debt that blocks production deployment.

Making Your Next Pilot Different

You’ve read about why projects fail. Here’s your action plan for the next AI initiative.

Before you start:

- Identify the business owner who will fund production deployment

- Define success metrics in business terms, not technical benchmarks

- Budget 3x your estimate for data preparation and cleaning

- Establish governance review processes that run in parallel with development

- Decide your kill criteria and commit to using them

During the pilot:

- Instrument everything to establish baselines and measure changes

- Use data that reflects real production conditions, not sanitized test sets

- Document operational procedures as you build them

- Train your internal team to operate and maintain the system

- Review progress against business metrics weekly

Before declaring success:

- Validate that your success metrics actually moved

- Confirm the business owner will fund production deployment

- Verify that production data quality matches pilot assumptions

- Test integration with all required enterprise systems

- Ensure your team can operate the system without vendor support

This framework won’t guarantee success. But it eliminates the most common failure modes and gives your project a realistic shot at production.

Moving From Proof of Concept to Proof of Value

The technology works. That’s not your problem. Your problem is organizational readiness to operate AI systems at scale.

Start smaller than you think necessary. Pick one workflow. Solve one problem. Measure one outcome. Build the muscle memory of taking AI from pilot to production before you tackle transformational initiatives.

The companies succeeding with AI aren’t the ones with the biggest budgets or the fanciest models. They’re the ones that learned to ship. They fail fast, learn constantly, and apply those lessons to the next project. They treat AI as an operational capability to develop, not a magic solution to purchase.

Your next pilot can be different. Make it about learning how to operate AI, not just proving it works. The technology will take care of itself. Your organization’s ability to use it is what determines whether you join the 5% that ship or the 95% that stall.